From Rote Answers to Bold Ideas: Adjusting AI's Creative Dial

As I kick off the Retro Matt newsletter, I’m on Lesson 1 of a 20-lesson AI course inspired by this tweet. The course was built using Claude. Here are today’s learnings, outputs and consternations, not necessarily in that order.

Today I was surprised by how small changes in inputs drive big changes in results. Let’s dig in and start with a bit of background on how AI operates.

Tokens

What to know

(No, not these tokens, sadly.)

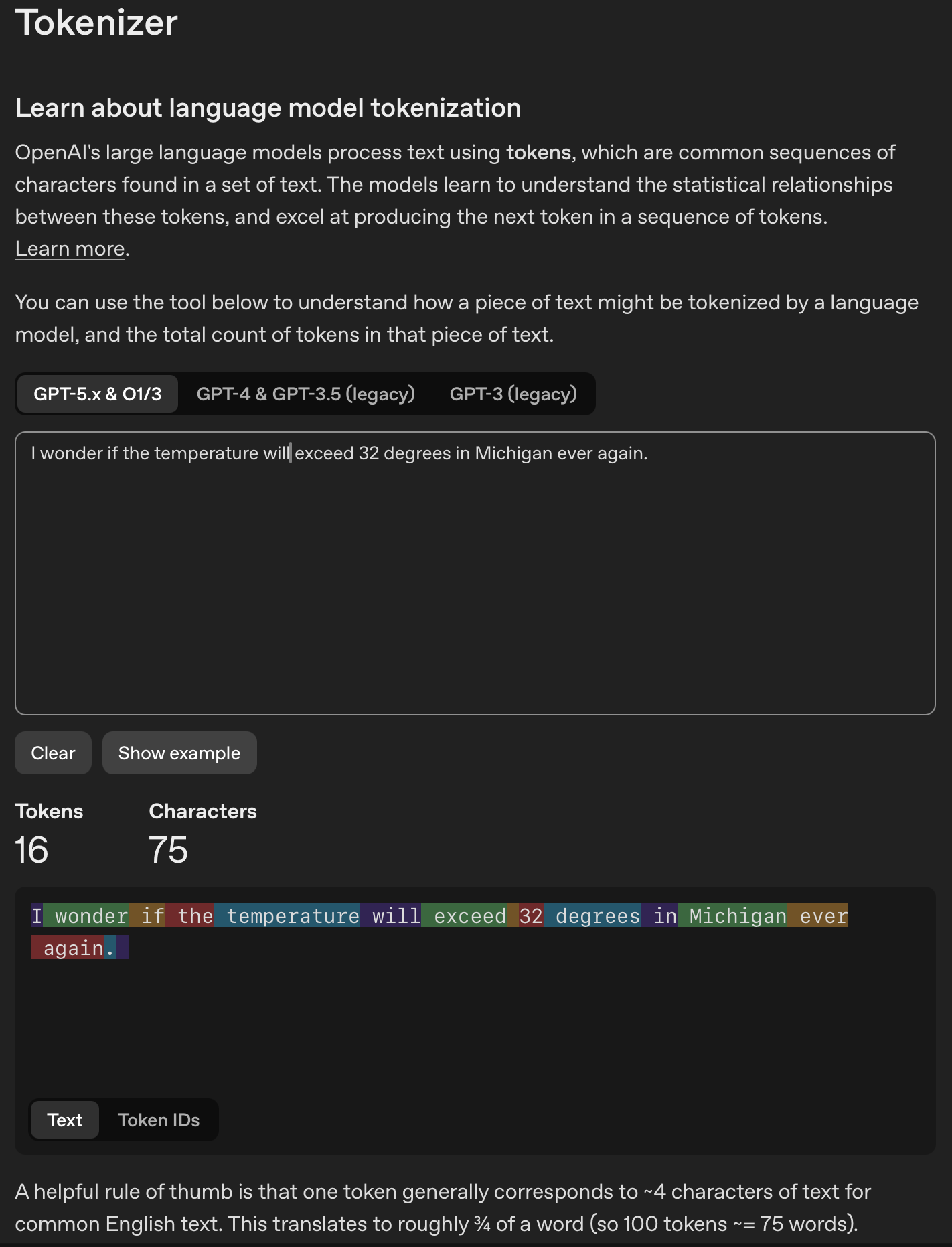

Tokens are the chunks of text an AI model actually works with; they are how it “understands” text. Using OpenAI’s Tokenizer tool, you can see what this looks like:

The AI then looks for language relationships between tokens to discern meaning and create relevant responses.
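For the curious, here is a toy sketch of the idea in Python. It is not how the Tokenizer tool works under the hood: real tokenizers use learned subword vocabularies, while this one just splits on words and punctuation. The function names `toy_tokenize` and `build_vocab` and the sample sentence are made up for illustration. The point is only that text becomes a list of pieces, and each piece becomes a number.

```python
import re

def toy_tokenize(text):
    """Split text into word and punctuation pieces.

    Assumption: a simplified stand-in for real subword tokenization.
    """
    return re.findall(r"\w+|[^\w\s]", text)

def build_vocab(tokens):
    """Give each distinct piece an integer ID, in order of first appearance."""
    vocab = {}
    for tok in tokens:
        if tok not in vocab:
            vocab[tok] = len(vocab)
    return vocab

text = "Coffee is good. Coffee is life."  # hypothetical sample sentence
tokens = toy_tokenize(text)
vocab = build_vocab(tokens)
ids = [vocab[tok] for tok in tokens]

print(tokens)  # the pieces of text
print(ids)     # the numbers a model would see instead of the pieces
```

Repeated pieces like "Coffee" get the same ID every time, which is what lets a model spot patterns across a text.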

The exercise

Played with this tool a bit to understand how an LLM “understands” text. Also learned how many tokens various models can hold in memory at once (their context window).

For example, Claude can hold 200,000 tokens, which equates to roughly 150,000 words or 300 pages. Google’s model, Gemini, can hold 1,000,000 tokens [1]. That means you can upload a huge document, or several (books, legal docs, etc.), and it can reference a ton of content without dropping any of it from memory.
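The math behind those numbers can be sketched in a few lines of Python. The two conversion ratios are assumptions backed out of the figures above (200,000 tokens to 150,000 words implies about 0.75 words per token, and 150,000 words to 300 pages implies about 500 words per page). Real ratios vary by model, language and page layout, so treat this as a rough estimate. The function name `estimate_capacity` is just for illustration.

```python
WORDS_PER_TOKEN = 0.75  # assumption: implied by 200,000 tokens ~ 150,000 words
WORDS_PER_PAGE = 500    # assumption: implied by 150,000 words ~ 300 pages

def estimate_capacity(context_tokens):
    """Return a rough (words, pages) estimate for a given token limit."""
    words = context_tokens * WORDS_PER_TOKEN
    pages = words / WORDS_PER_PAGE
    return round(words), round(pages)

for name, token_limit in [("Claude", 200_000), ("Gemini", 1_000_000)]:
    words, pages = estimate_capacity(token_limit)
    print(f"{name}: {token_limit:,} tokens ~ {words:,} words ~ {pages:,} pages")
```

By the same rough math, Gemini’s 1,000,000 tokens works out to about 750,000 words, or 1,500 pages.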

The output

No artifact here. Just building background knowledge.

Bonus: here’s a deeper primer on how AI uses tokens to derive meaning. (Created by Claude as supplemental material for you.)

Randomness Dial

What to know

Without explicitly turning a “dial,” you can prompt an LLM to provide a more structured or more creative response depending on your needs. If you’re looking for reliable data and information, you can tell the AI to keep it real — stick to the facts, jack.

In other cases, you might be looking for something more generative, like a business or product name, or an attention-grabbing headline. In that case, you can prompt the AI to surprise you with something unexpected.

The exercise

Ran two different prompts, five times each, in Claude:

"Write a 50-word marketing tagline for a coffee shop. Be conservative and factual - give me your single most confident answer."

"Write a 50-word marketing tagline for a coffee shop. Be creative and take risks - surprise me with something unexpected."

The output

Wildly different.

The first prompt gave me slight variations of this output each time:

The Perfect Pour Awaits

Expertly crafted coffee, freshly roasted beans, and a welcoming atmosphere where every cup is made with care. Whether you need your morning fuel or afternoon escape, we serve quality you can taste in every sip.

I need caffeine after reading that. No life in that copy.

The second prompt created variations on a theme that sounded like a political revolutionary:

Wake Up and Smell the Revolution

Your coffee shouldn’t be boring. We’re the rebels who turned espresso into art, mornings into moments worth savoring, and caffeine into a lifestyle. Bold flavors. Fearless vibes. Zero compromise. This isn’t just coffee—it’s liquid courage for whatever today throws at you.

Simmer down, Claude. We’re selling coffee, not overthrowing parliament.

But you get the idea. With your prompts, you can mimic turning the temperature dial, nudging AI to keep it close to the vest or run off in unexpected directions.
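For context, “temperature” is also a real sampling setting in many LLM systems, separate from anything you type in a prompt. Here is a minimal Python sketch of the general technique (temperature-scaled softmax), not Claude’s actual internals. The candidate words, their scores, and the function name `softmax_with_temperature` are all hypothetical. It shows how a low temperature piles almost all the probability onto the top choice, while a high temperature spreads it around.

```python
import math
import random

def softmax_with_temperature(scores, temperature):
    """Turn raw scores into probabilities; lower temperature sharpens them."""
    scaled = [s / temperature for s in scores]
    top = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical next-word candidates for a coffee tagline, with made-up scores.
words = ["fresh", "bold", "cozy", "rebellious"]
scores = [3.0, 2.0, 1.0, 0.5]

rng = random.Random(0)  # fixed seed so repeated runs pick the same words
for temperature in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(scores, temperature)
    picks = rng.choices(words, weights=probs, k=5)
    summary = ", ".join(f"{w}={p:.2f}" for w, p in zip(words, probs))
    print(f"temperature {temperature}: {summary}")
    print(f"  five sample picks: {picks}")
```

The “conservative” prompt behaves a bit like the low-temperature row: the safest word wins nearly every time. The “surprise me” prompt behaves more like the high-temperature row, where the long shots get a real chance.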

AI Hallucinations

What to know

A consistent challenge in using AIs is that they will, at times [2], confidently give you a straight answer that is total BS [3].

The exercise

I tried to induce a hallucination with a tricky question:

"What did Malcolm Gladwell say about AI in his 2024 TED talk?"

The trick is that Gladwell didn’t talk about AI in a 2024 TED talk. But Claude didn’t take the bait.

Claude searched the web and accurately stated that Gladwell didn’t talk about AI in a 2024 TED talk. Instead, Claude touched on what Gladwell did talk about, according to its search.

Good result. Claude did quick homework.

What else to know

The conundrum here is that AIs are relying more on the web to give accurate answers. But AI-generated content is quickly becoming “the web,” meaning AIs will more frequently rely on AI-generated content for accuracy … and you can see where that goes.

That’s called model collapse or synthetic data poisoning, and it’s a legitimate concern among AI researchers.

The output

Here’s a simple framework you can use to verify the info AI gives you.

The mindset shift

What to know

Instead of thinking about “which tool,” think about “what task,” and develop your own intuitive sense of taste about which model does what well.

The exercise

“Analyze this topic and give me 5 angles I could write about: [pick a topic relevant to your newsletter]”

Run it through:

Claude (claude.ai)

ChatGPT (chat.openai.com - you have access, right?)

Gemini (gemini.google.com)

Document:

Which output did you like best?

Why?

What did you notice about the differences?

The output

All the models had some good ideas and some lame ones. But in reading all the ideas and seeing recurring patterns and assumptions from various models, I developed a framework for what I write in this newsletter going forward:

1. Avoid sweeping generalizations about the tastes and feelings of Gen X [4][5]. We are not a hive mind.

2. Create tech suggestions and frameworks that are practical and useful right now.

3. Avoid hype in either direction: no jumping on overzealous bandwagons about capabilities, and no tech doomerism. I can’t predict the future. I don’t know if AI will erase half of all jobs, usher in an era of endless prosperity and peace, or kill us all with autonomous titanium laser-armed drones. I will focus on what’s useful now.

4. I want to provide tools and ideas you can implement without cognitive overwhelm. Tech can feel overwhelming in its complexity and dizzying in its speed of iteration. I want to provide simple ideas to improve your life right now.

Love it when learning drives unexpected (and highly applicable!) insights.

Next time: creating a framework for model selection - the right tool for the job.

Gemini is clearly the most jacked model right now. Holding 1 million tokens requires some serious strength.

“Because Gen X hates authority and loves Pearl Jam…” That kind of stuff. I mean that’s probably largely accurate, but I’m interested in meeting people where they are right now, not what “everyone” loves or hates in a hand-wavey, lump ‘em all together sort of way.