Beyond the Big 3: What I Learned Testing 5 AI Models in One Afternoon

A Chinese AI model just outperformed ChatGPT in my latest AI lesson. By a lot.

Quick test platforms

My lesson for today was about test-driving low-cost alternatives to the big three (ChatGPT, Gemini, and Claude).

For this test, I used Poe.com, which lets you jump between models on the free tier until you reach your chat limit for each model.

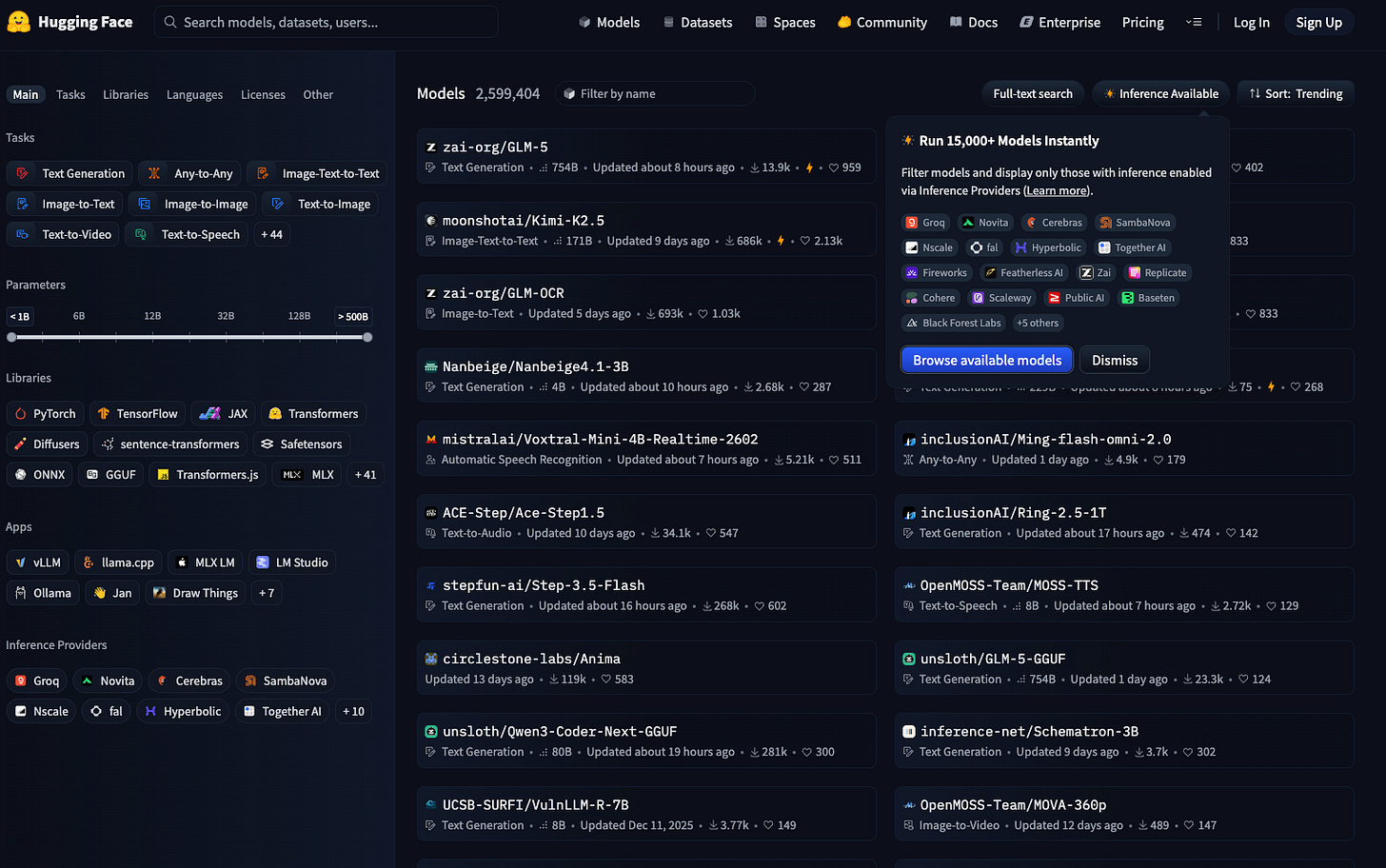

There are others. OpenRouter lets you work on the cheap, if you can get it set up.[1] Hugging Face is another, but there's a lot going on there. Just looking at it gives me instant brain freeze.

Don’t get overwhelmed.

You want to test drive a few models, not the entire fleet.

The prompt test

In my AI lesson, Claude suggested the prompt, "Write a 200-word newsletter intro about why GenX professionals should care about open source AI models," which I sent to:

Claude 4.6 Opus

GPT-5.2

Gemini Pro

DeepSeek V3

Llama 3.3

All through Poe’s easy-to-use interface.

The results

I wouldn't use any of the results[2] as-is. They were full of Gen X clichés:

“Using open source is like owning CDs!”

“Remember when we used to buy software on disk?”

But guess which model got the closest?

DeepSeek, the lowest-cost model among the paid AIs:

> But there's another path: open source AI models. Think of them like the early, independent internet, not owned by any one corporation. For us, the practical upside is twofold.
>
> First, cost. Once set up, these models can run on your own systems, eliminating those growing subscription fees.
>
> Second, independence. You're not locked into a single company's ecosystem, rules, or future decisions.

DeepSeek did gloss over something important here. From what I understand, running these models locally takes a high-powered computer with lots of expensive RAM, except for a few lightweight options.[3] And setup on your own computer can be a challenge.

The takeaway

The more expensive models didn’t win by default. But without a quick and easy way to test, I’d never have known.

Model testing is easy and valuable. The landscape keeps changing, so retest from time to time: for example, keep a standard prompt and rerun it every few months. You can also use Poe to fact-check one model's answers against the others, since they all tend to hallucinate.[4]

[1] I couldn't. OpenRouter kept rejecting my home address, so I couldn't buy $5 worth of credits. Interesting business model.

[2] For the record, I write my own stuff. I do have a custom editor project in Claude that holds 200+ of my previous Substack posts and helps with flow, structure, and some sentence rewrites. I think we are all bionic writers now.